When Kids Play 'Who is the Hacker': How a Simple Game Reveals Our Hidden Biases

"Why does the computer think everyone with a beanie is suspicious?" asked a 13-year-old in my class. In that moment, he understood algorithmic bias much better than many adults I know.

The Birth of a Bias-Teaching Game

While researching the curriculum for my AI and Ethics class, I watched Joy Buolamwini's Gender Shades project reveal how earlier facial recognition systems failed on darker-skinned faces. The finding is historical and the problem is less prevalent in newer models, but it remains one of the easiest ways to understand bias. The challenge was how to explain algorithmic bias to children. How do you make visible the invisible prejudices that shape our AI systems?

Eventually, the answer came through play: I built a game.

How the Game Works

The game has two roles, each teaching different lessons:

The Security Guard: Training Your Own Bias

Players start as security guards, rapidly sorting through faces to identify who looks "suspicious." With only 2 minutes on the clock, they make split-second decisions, like real security systems do.

What they don't realize initially is that they're training an AI system with every choice. Their snap judgments become the algorithm's worldview.
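
To make this concrete, here is a minimal Python sketch of how a guard's choices could become a model. It is not the game's actual implementation: the feature names, the `choices` data, and the `train` function are all assumptions for illustration, and the "model" is nothing more than a per-feature tally of how often the guard flagged a face.

```python
from collections import defaultdict

# Hypothetical guard choices: (features on the face, marked suspicious?)
choices = [
    ({"beanie", "sunglasses"}, True),
    ({"beanie"}, True),
    ({"glasses"}, False),
    ({"sunglasses", "beard"}, True),
    ({"glasses", "beard"}, False),
]

def train(choices):
    """Return, for each feature, the fraction of faces with it that were flagged."""
    seen = defaultdict(int)
    flagged = defaultdict(int)
    for features, suspicious in choices:
        for feature in features:
            seen[feature] += 1
            if suspicious:
                flagged[feature] += 1
    return {feature: flagged[feature] / seen[feature] for feature in seen}

model = train(choices)
for feature, score in sorted(model.items()):
    print(f"{feature}: {score:.2f}")
```

With these five made-up choices, the model ends up fully convinced that beanies and sunglasses are suspicious and that glasses are safe. Nothing in the code knows anything about hackers; it only mirrors the guard's snap judgments.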

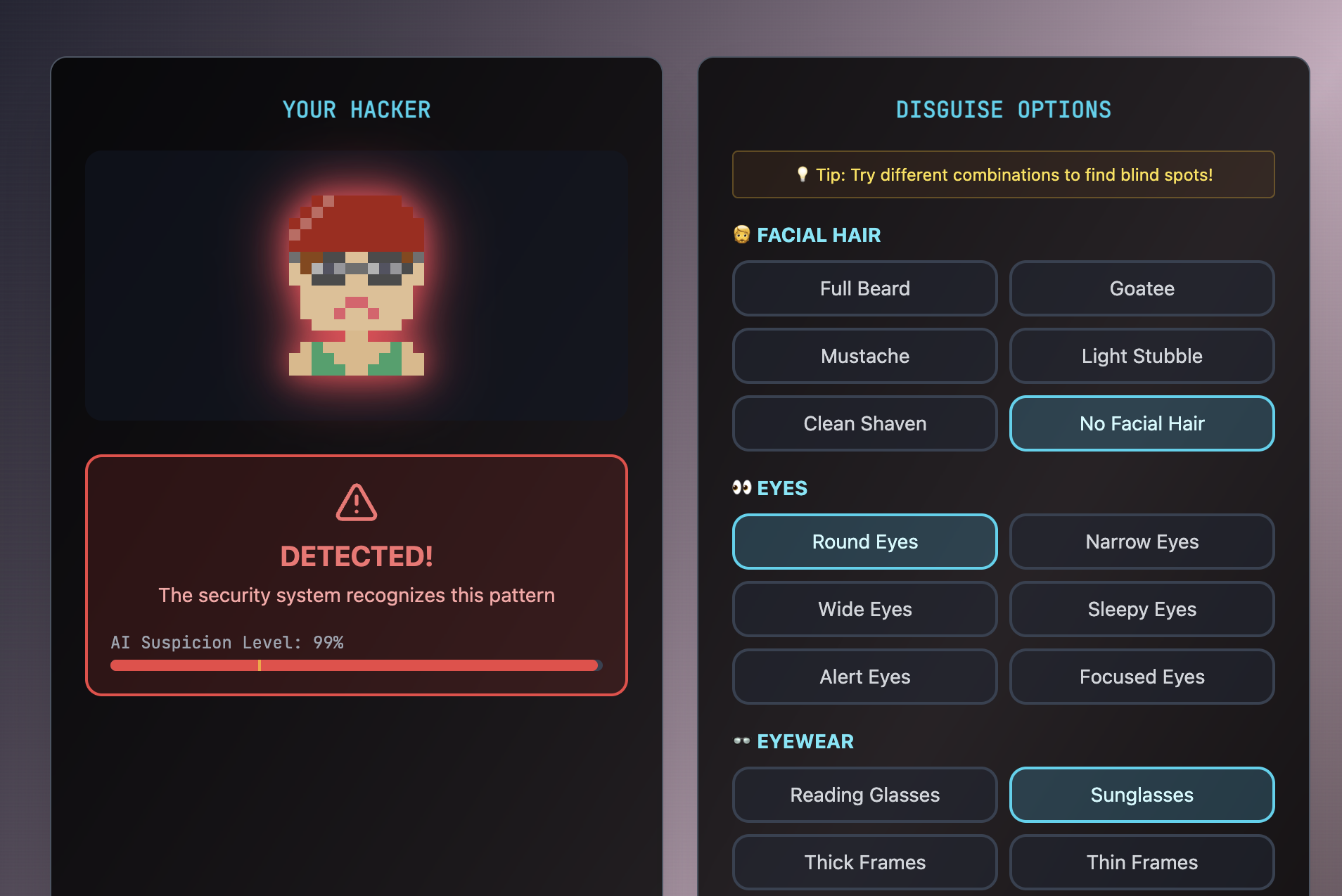

The Hacker: Exploiting Blind Spots

Then comes the twist. Players switch sides, becoming hackers trying to sneak past the very security system they just trained. They choose the models their friends trained. Suddenly, the mystery doubles.

"The computer thinks everyone with sunglasses and a beard is a hacker!" one student observed, immediately grasping that she could exploit this pattern by choosing different features.

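The hacker's strategy can be sketched in a few lines of Python. This is an illustration under assumptions, not the game's code: the `model` scores are invented, and scoring a disguise by averaging its feature scores is just one simple choice.

```python
from itertools import combinations

# Hypothetical suspicion scores a trained model might hold per feature
# (1.0 = always flagged by the guard, 0.0 = never flagged).
model = {"sunglasses": 1.0, "beard": 0.9, "beanie": 0.7, "glasses": 0.1, "hat": 0.2}

def suspicion(features):
    """Score a disguise as the average of its features' scores."""
    return sum(model[f] for f in features) / len(features)

def best_disguise(size=2):
    """Try every combination of `size` features and return the least suspicious."""
    return min(combinations(sorted(model), size), key=suspicion)

disguise = best_disguise()
print(disguise, round(suspicion(disguise), 2))
```

Once the model's pattern is known, getting past it is a matter of picking the features it never learned to distrust, which is exactly what the student did.
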
The Beautiful Moment of Recognition

Watching children play this game has been revelatory. Here are actual moments from gameplay sessions:

The Pattern Recognition: "Wait, I marked everyone with glasses as suspicious. That's not fair. My friends wear glasses too!"

The Data Bias Discovery: "The computer is dumb! It thinks all people with beanies are bad because I showed it three bad guys with beanies!"

The Representation Revelation: "I don't have enough people with hoodies in my dataset!"
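
The "three bad guys with beanies" problem is a small-sample problem, and a little arithmetic shows why. The sketch below compares a raw flag rate with Laplace (add-one) smoothing, a standard statistical technique that the game itself is not claimed to use; the function names are hypothetical.

```python
def raw_rate(flagged, seen):
    """Fraction of faces with a feature that were flagged, taken at face value."""
    return flagged / seen

def smoothed_rate(flagged, seen):
    """Laplace (add-one) smoothing: act as if we had also seen
    one flagged face and one safe face before any real data."""
    return (flagged + 1) / (seen + 2)

# Three beanie faces, all marked suspicious.
beanie_raw = raw_rate(3, 3)            # 1.0: "all beanies are bad"
beanie_smoothed = smoothed_rate(3, 3)  # 0.8: suspicious, but not certain

# No hoodie faces in the dataset at all: the raw rate is undefined (0 / 0),
# while the smoothed rate admits it has no idea.
hoodie_smoothed = smoothed_rate(0, 0)  # 0.5

print(beanie_raw, beanie_smoothed, hoodie_smoothed)
```

Three examples are enough for a naive model to reach total certainty, and zero examples leave it with nothing to say at all. That is the same gap the students noticed with beanies and hoodies.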

The Unconscious Becomes Conscious

The most powerful moments come when children recognize their own biases:

- "I didn't mean to, but I kept marking people with glasses as suspicious"

- "I think I was choosing people who look like movie villains"

- "The beanie faces seemed more hackery!"

We also talked about how recommendation algorithms decide based on limited evidence. For example: "This is like when YouTube keeps showing me the same type of videos because I clicked on one cat video."

Beyond Beanies and Sunglasses: Real-World Connections

While kids initially laugh at the obvious patterns ("Beanies = Hackers"), deeper conversations emerged from questions such as "Why does someone always get 'randomly' selected at airports?", which led us into profiling.

Teaching Moments That Emerged

1. Bias Isn't Always Intentional

Kids quickly learn that they didn't mean to create unfair systems—it just happened through rushed decisions and limited examples.

2. Data Diversity Matters

When one group consciously tried to include diverse characteristics in their "suspicious" and "safe" categories, their AI performed more fairly.

3. Speed Pressures Increase Bias

The time limit forces snap decisions, mimicking real-world scenarios where bias thrives under pressure.

4. Algorithms Amplify Human Decisions

"The computer is just copying me, but worse!" perfectly captures how algorithms can amplify human biases.

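One way this amplification can happen is through a hard decision threshold. The Python sketch below uses invented numbers and a hypothetical `model_decision` function; it assumes the model learns a single flag rate per feature and then flags anything above 50%.

```python
# Hypothetical guard labels for five faces wearing a beanie: 3 of 5 flagged.
guard_labels = [True, True, True, False, False]
guard_rate = sum(guard_labels) / len(guard_labels)  # 0.6

def model_decision(learned_rate, threshold=0.5):
    """Flag a face whenever the learned rate for its feature exceeds the threshold."""
    return learned_rate > threshold

# The model now sees five new beanie faces and applies the same rule to each.
model_flags = [model_decision(guard_rate) for _ in range(5)]
model_rate = sum(model_flags) / len(model_flags)  # 1.0

print(f"guard flagged {guard_rate:.0%}, model flags {model_rate:.0%}")
```

The guard was suspicious of beanies a little more than half the time. The model, forced into a yes-or-no answer, is suspicious every time.
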
The Gender Shades Legacy in Action

This game translates Joy Buolamwini's important research into experiential learning. Just as Gender Shades revealed bias in facial recognition, "Who is the Hacker" reveals bias in decision-making. But more importantly, it empowers kids to:

- Recognize bias in systems they interact with daily

- Understand how bias gets encoded into technology

- Question the fairness of algorithmic decisions

- Imagine more equitable alternatives

Building Better Builders

My hope is that students will now ask 'Who trained this?' whenever we talk about AI. They can probe technology rather than be passive consumers.

This critical lens is what we need. As these children grow up to potentially build the next generation of AI systems, they carry with them the visceral memory of creating biased systems, and the knowledge of how to do better.

Class Discussions

The game is just the beginning. It opens doors to discussions about:

- Why do we have the biases we do?

- How do movies and media shape our idea of what a "hacker" looks like?

- What would a fair security system look like?

- Who gets to decide what's "normal" or "suspicious"?

Try It Yourself

The game is freely available at saranyan.com/kls/who-is-the-hacker. Play it with your kids, your students, or by yourself. Pay attention to your patterns. Question your quick decisions. And remember: every time we train an AI, we're encoding our view of the world.

Inspired by Joy Buolamwini's Gender Shades and the Algorithmic Justice League. Built with the belief that understanding bias through play is the first step toward algorithmic justice.

Special thanks to the students who played, questioned, and taught us all something about fairness in the process.

Join the Discussion

I look forward to hearing your thoughts! Share your perspective, ask questions, or add to the conversation.