After the Detective Work: Charting the Future of AI Education

A reflection on winning the Global Dialogues Challenge and what comes next

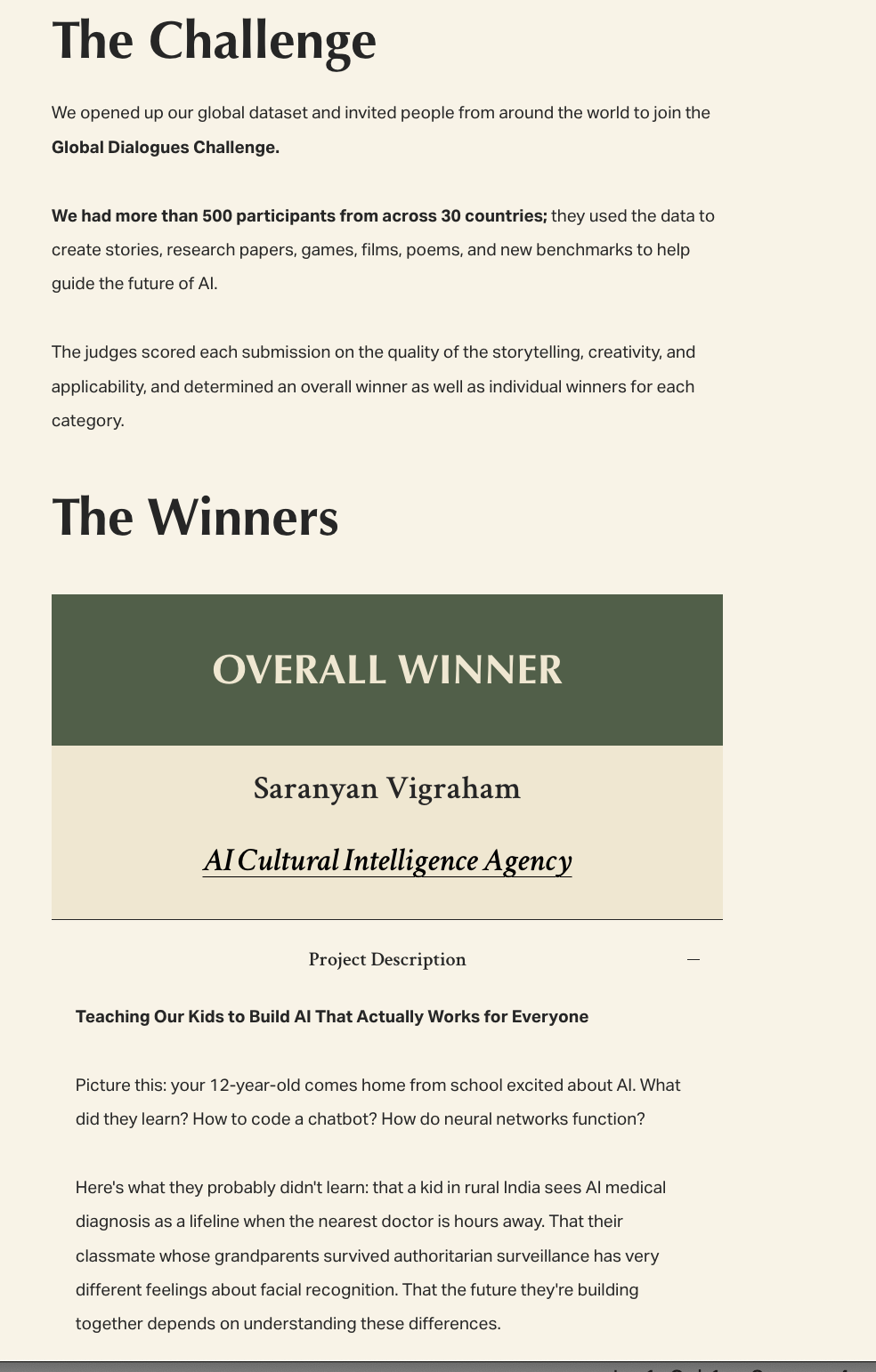

Looks like I won the Global Dialogues Challenge. Didn't expect it. Just wanted to build something fun.

My framework for choosing what to work on is simple: if I'm convinced an idea will make the world a better place for my children to grow up in, I work on it. That's how this project was born. Views on AI today are shaped by a small number of people, and they are lopsided, biased, and misguided. I have been looking for ways to change that.

Children are natural cultural anthropologists. They don't just accept that people think differently—they're genuinely curious about why. When my 13-year-old discovers their "Cultural Twin" is a country they've never heard of, they don't dismiss it. They investigate.

This curiosity is a superpower, and we're wasting it.

Right now, AI education focuses on the technical: neural networks, machine learning algorithms, coding frameworks. These are important, but they're just tools. We're teaching kids to build hammers without helping them understand what deserves to be built, torn down, or left alone entirely.

Meanwhile, the most critical AI decisions ahead of us are fundamentally human:

- Should AI tutors adapt to local cultural learning styles or promote universal approaches?

- How do we design healthcare AI that works for communities with different relationships to technology and authority?

- What happens when AI-powered hiring tools encounter cultures with different definitions of merit and potential?

These are the questions today's children will have to answer, and none of them is purely technical.

The Education Revolution We Need

We need a new kind of AI literacy—one that starts with human understanding, not code syntax.

Imagine classrooms where:

- Cultural Detective Units pair students from different countries to investigate why their communities react differently to the same AI applications

- Bias Archaeology helps kids uncover their own assumptions before they embed them in algorithms

- Empathy Engineering teaches children to design technology by first understanding whose lives it will touch

- Global AI Exchange Programs connect young minds across continents to collaborate on solutions that work for all their communities

We don't need to add more subjects to an overloaded curriculum. We can transform how we teach the subjects that will matter most.

If you have played the game, or have kids who did, you might write your own creed along these lines:

Curiosity over Judgment: Teach children to investigate why people think differently, not to dismiss perspectives they don't immediately understand.

Questions before Answers: The right question asked by a 12-year-old is more valuable than the wrong solution built by a PhD.

Stories over Statistics: Data points represent human experiences. Help kids see the person behind every percentage.

Collaboration over Competition: The biggest challenges require diverse perspectives working together, not the smartest person working alone.

Empathy as Engineering: Understanding users isn't a nice-to-have—it's the foundation of technology that actually works.

An Invitation

To the educators reading this: you don't need to be an AI expert to start this investigation. You just need to help kids ask better questions. Why might your pen pal in Kenya feel differently about AI medical diagnosis than your neighbor does? What would it mean to design technology that works for both?

To the parents: your children are already digital natives navigating AI-powered apps and games. Help them become cultural natives too—curious about why their online friends from different countries might experience the same technology differently.

To the policymakers: invest in education that prepares young minds for an AI future, not just AI jobs. Fund programs that teach cultural intelligence alongside computational thinking.

To the technologists: the next generation of builders is watching. Show them that the most sophisticated algorithm is worthless if it doesn't understand the humans it's meant to serve.

Join the Discussion

I look forward to hearing your thoughts! Share your perspective, ask questions, or add to the conversation.